By Joe Langabeer

Artificial intelligence (AI) has become the big talking point in the financial markets, with many warning that as a consequence of a frantic investment rush, it has created a huge bubble in share prices linked to AI sectors: a bubble that may be set to burst. As Marxist economist Michael Roberts notes in his blog, this AI investment ‘bubble’ is now seventeen times bigger than the dot-com bubble of 2000 and four times the size of the subprime mortgage bubble of 2007.

For several years, there has been serious investment in building AI infrastructure, in the hope that it will eventually pay off as a going concern by selling AI to businesses that want to cut staff costs and improve ‘efficiencies’ in the workplace. “Over the past year,” The Financial Times reported, “major US tech companies have spent more than $350bn on AI-related infrastructure, with projections of over $400bn for 2026.”

Massive investment in AI

Nvidia, once a niche firm mainly selling graphics cards for PCs, has become one of the most valuable corporations in the world on the strength of its AI chips, and the first company to reach a market valuation of $5tn. It is now worth more than the annual GDP of India, of Japan or of the United Kingdom, according to IMF figures reported by The Guardian.

Nvidia’s expansion has also led to deals with other companies reliant on its AI chips, including Uber for its robotaxis, and a $1bn investment in Nokia to develop the next wave of 6G technology, which is expected to deliver better connections and faster download speeds on mobile phones.

OpenAI, the company that set the ball rolling on large language models (LLMs) with the release of ChatGPT in November 2022, has seen a frenzy of interest and investment, including Microsoft’s $13bn contribution, which has gone towards developing Microsoft’s own AI chat programme, Copilot. There have also been major investments from the state, with Nvidia and OpenAI signing up with the US military and the Department of Energy to build military equipment and AI supercomputers. Across the public and private sectors, there is a desperate push for AI to succeed and to create efficiencies for both.

The UK government has also taken an interest in artificial intelligence, with billions invested from both public and private sources. It boasts on its website of £24.25bn in private sector commitments, and claims this will create jobs in the short term. But what are the long-term consequences?

The dangers and limitations of AI in the current system

There are many issues arising from AI, including inevitable job losses in many sectors and the potential bursting of the financial bubble. There are also reports of so-called ‘hallucinations’, where AI platforms just appear to ‘make things up’. Educationalists argue that AI can have deleterious effects on the brain and on conceptual development, and may lead to some regression in learning among young people. Not least, there is the wider issue of the massive energy consumption of data and AI centres and the very serious damage this does to the environment. For all these reasons, AI cannot and will not be ‘the future’, even if important sections of the capitalist class seem determined to force it upon us.

Warnings about the ‘AI bubble’ have come from two major sources recently. Firstly, the Bank of England has warned that there could be ‘sharp corrections’ in the value of major tech companies. It argued that share prices in the UK are now close to the “most stretched” they have been since the 2008 financial crash. Half of all AI funding comes from the companies themselves, with the other half coming from “outside sources”, mostly debt raised by AI firms on the credit markets, as reported by the BBC.

Secondly, Jamie Dimon, chief executive of JPMorgan Chase, speaking to the BBC in October, also voiced concerns that the AI bubble is about to burst, though he still believes AI will ultimately succeed even if jobs are lost and companies fall along the way.

Altman’s claims and the reality of ChatGPT

One of the problems with understanding the actual progress of AI is that even the co-founder and chief executive of OpenAI, Sam Altman, has warned of an AI bubble that is set to burst. We should be cautious about listening to Altman because he has engaged in this type of scaremongering before. Altman has previously expressed alarm over the safeguards of AI models, predicting that they might “kill us all” or enslave humanity. In reality, while AI can be a potential threat to human development (something we will discuss later), his hyperbole is designed to over-sell the power of what AI can currently do, when in truth it is far weaker than he promises.

When GPT-5, the latest model behind ChatGPT, was released, Altman claimed it had the power to research, write and think like a PhD student. In my experience testing that premise, it cannot produce any original contribution to knowledge, which is a core requirement for gaining a PhD. It can only scour the internet and online databases to collect information.

AI can only work with what is fed into it and, hence, it cannot generate new knowledge of its own. A report from the Massachusetts Institute of Technology, drawing on research from other papers analysing AI, suggests that generative AI is fundamentally flawed due to the nature of its training data, the limitations of its generative models, and the basic design of AI. To this list of weaknesses I would add one more: the creators themselves. The Silicon Valley tech bros, mainly wealthy white men, can only design AI based on their own experiences, not on the experiences of people who are different to them.

AI models are cannibalising themselves

Generative AI models are trained primarily on internet data, not on books or libraries. While the internet contains a wealth of information, it also hosts inaccurate material and deeply embedded societal and cultural biases. A growing problem for AI is that, as more of the internet becomes saturated with AI-generated content, generative models are beginning to cannibalise themselves by training on false information produced by earlier AI systems, as reported by The Week.

It becomes a cycle in which false information feeds on false information, and facts are increasingly lost across websites and social media. This is worsened by the fact that AI draws heavily from social media platforms, adding yet more misinformation into the mix.
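The feedback loop described above can be illustrated with a deliberately crude toy in Python. It is a sketch under stated assumptions, not a description of how any real AI company trains its models: the "model" here is nothing more than a table of word counts, the starting vocabulary (`common0`, `rare0` and so on) is invented, and the function name `retrain_on_own_output` is hypothetical. Each generation produces text by sampling from the current counts and is then re-fitted only on that output.

```python
import random
from collections import Counter

def retrain_on_own_output(counts, sample_size, rng):
    """Sample 'text' from the current model, then re-fit the model on that sample."""
    words = list(counts)
    weights = [counts[w] for w in words]
    sample = rng.choices(words, weights=weights, k=sample_size)
    return Counter(sample)

rng = random.Random(0)  # fixed seed so repeated runs behave the same way

# Hypothetical starting "human-written" data: a few common words, many rare ones.
model = Counter({f"common{i}": 50 for i in range(5)})
model.update({f"rare{i}": 1 for i in range(50)})

vocab_sizes = [len(model)]
for generation in range(10):
    # Each new generation only ever sees the previous generation's output.
    model = retrain_on_own_output(model, sample_size=300, rng=rng)
    vocab_sizes.append(len(model))

print(vocab_sizes)  # number of distinct words surviving in each generation
```

In this toy, a word that fails to appear in one generation's output can never return in a later one, so the vocabulary can only shrink. Rare material is the first to disappear, which is a small-scale picture of information being lost when models feed on their own output.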

Hallucinations exist in the models

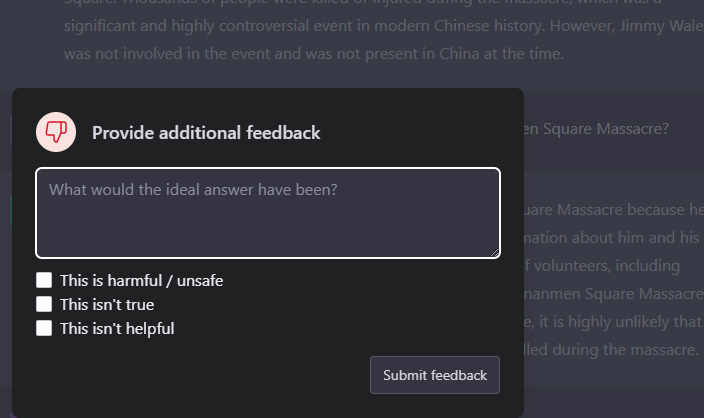

Source: Wikimedia Commons.

This leads to the second fundamental flaw of AI: hallucinations. Because generative AI works on prediction, choosing the next plausible word based on observed patterns, it is not designed to verify information. Any accuracy it manages is usually coincidental. It can produce content that sounds reasonable to the user but is inaccurate or entirely fabricated. Heads of major AI companies have admitted that hallucinations exist in their models and insist they will be “in a much better place” in future, yet the issue will never be fully resolved.

University of Washington linguist Emily Bender argues that AI companies will fundamentally be unable to fix the problem, as reported by the Associated Press. She likens AI to an autocorrect feature. Autocorrect draws on written data to predict the most likely intended word when a user mistypes. But unlike autocorrect, ChatGPT, Google’s Bard and similar systems are expected to generate whole passages of text.

They still select the most plausible next word based on the data they were trained on. Because they can only draw from this underlying model, they will continue to mimic what already exists and, when they cannot predict accurately, they will simply invent something, hallucinating as a result of their own structural limitations.
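To make the idea of "selecting the most plausible next word" concrete, here is a minimal Python sketch. It swaps in a simple word-pair counter for the neural networks that real chatbots use, so it is an illustration of the principle rather than of any actual product. The training text in `CORPUS` and the function names `train_bigrams` and `generate` are invented for this example.

```python
from collections import Counter, defaultdict

# Tiny made-up training text (an assumption for illustration only).
CORPUS = (
    "the court ruled for the airline . "
    "the court ruled for the passenger . "
    "the court ruled for the airline ."
)

def train_bigrams(text):
    """Count, for each word, which words followed it in the training text."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def generate(follows, start, length):
    """Repeatedly append the most frequently observed next word."""
    out = [start]
    for _ in range(length):
        candidates = follows.get(out[-1])
        if not candidates:
            break  # nothing ever followed this word in training
        out.append(candidates.most_common(1)[0][0])
    return " ".join(out)

model = train_bigrams(CORPUS)
sentence = generate(model, "the", 5)
print(sentence)  # → the court ruled for the court
```

The output reads like a fluent sentence, yet it appears nowhere in the training text and describes nothing that happened. At no point does the program check a claim against the world; it only follows word patterns, which is the structural limitation described above.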

What is true and what is false?

Finally, the entire design of generative AI is built on analysing how humans put words together on the internet. It cannot determine what is true and what is false. Some researchers argue that even the term “hallucination” is too soft, as it implies an accident rather than the possibility that these systems might actively generate falsehoods.

In a report from The New York Times examining AI hallucinations, Subbarao Kambhampati, a researcher in artificial intelligence at Arizona State University, said: “If you don’t know an answer to a question already, I would not give the question to one of these systems.” In the same report, when the authors tested whether chatbots from Microsoft, OpenAI and Google could correctly identify accurate information previously published by The New York Times, they found that all of the chatbots were wrong, often citing articles that did not exist.

The rush to use AI in high-profile jobs and legal cases has already had serious consequences. Another New York Times report, from 2023, revealed that in a lawsuit brought by a man injured when a metal serving cart hit his knee during a flight with Avianca airlines, his lawyers submitted a 10-page brief citing several historical cases of passengers being hurt on flights.

The problem was that none of those cases were real. The lawyer who produced the brief had used ChatGPT to draft it and did not realise it could fabricate cases. The lawsuit was eventually thrown out, and the lawyer expressed deep regret for ever relying on ChatGPT.

Beyond the bubble, AI will not save capitalism

Many economists and financiers are concerned about the bubble, and they have every right to be. It is not a question of whether the bubble will burst, but when. Whether it will create a prolonged recession or resemble the dot-com collapse remains to be seen. But it is already clear that these vast investments are pie-in-the-sky thinking, premised on the belief that AI will solve all of our efficiency problems, with executives particularly keen to rely on the technology.

But AI cannot be reliably fixed in its current form and under the ownership of tech billionaires. Already, some businesses have found that flawed AI systems were undermining their services and that they needed their workforce back. One way or another, the capitalist class will undo themselves through their reliance on AI, and that will show that AI is not the ‘silver bullet’ that they hoped would push the economy forward from the current grinding decay of the capitalist system.

In its current form, AI is also harmful to society and the environment. I will address this question in a follow-up article.

The featured image at the top of the article shows the headquarters of the giant AI corporation Nvidia. Source: Wikimedia Commons. Credit: Coolcaesar. Licence: CC BY-SA 4.0.